Whale Waking Up? The Deepseek Paradox and the 2026 AI Horizon

In the high-stakes theater of global computation, silence is rarely empty; it is usually a sign of compilation. For the better part of late 2025, the repository activity for Hangzhou-based Deepseek was conspicuously quiet. The commit logs slowed. The white papers ceased. To the casual observer, it appeared the startup, which had disrupted the open-source ecosystem with its V3 model, had hit a plateau.

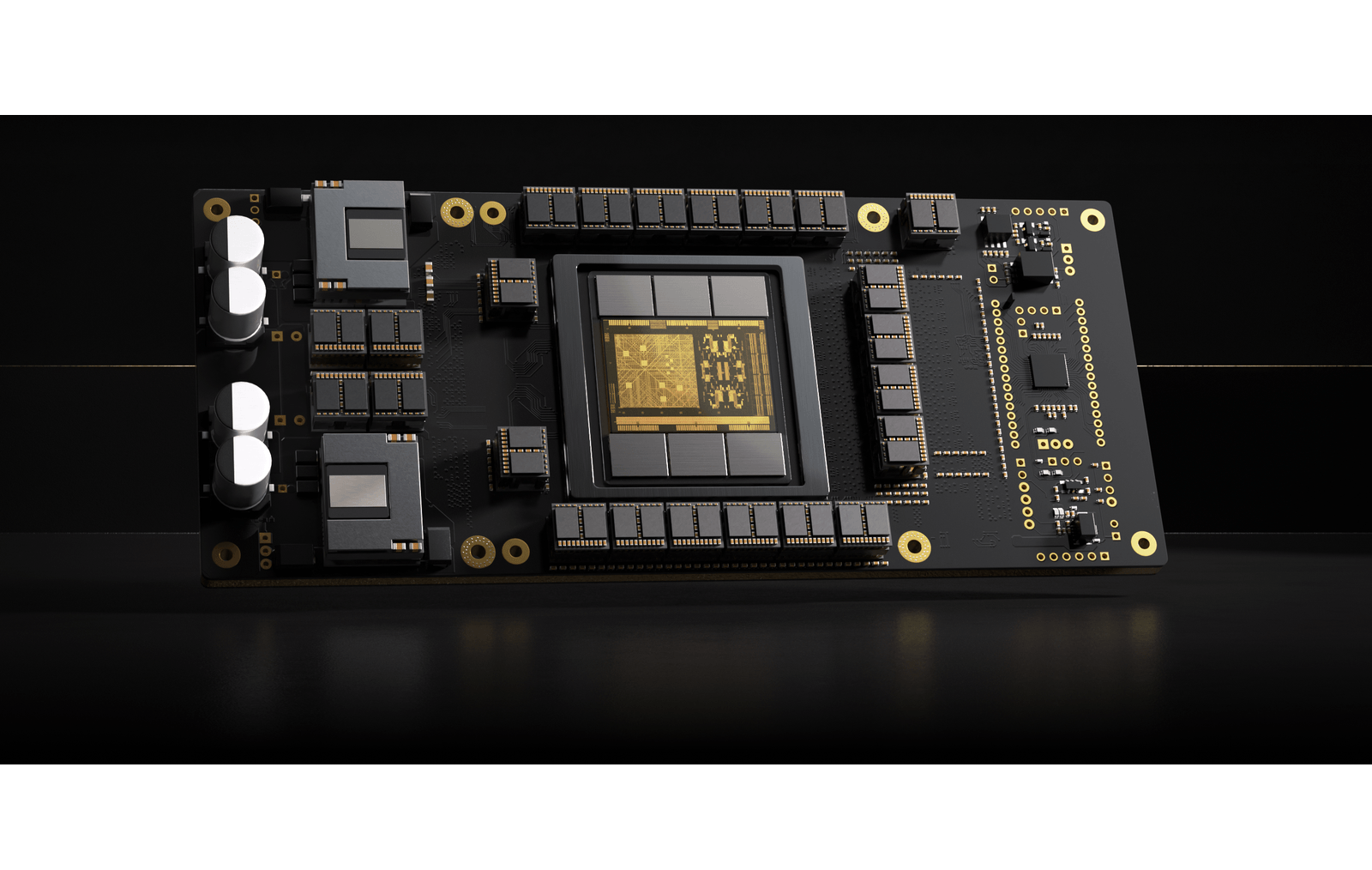

Figure 1: The "Whale" isn't sleeping, but what is it building?

This assumption was a mistake. In the algorithmic arms race, silence often indicates a pivot from optimization to architectural overhaul. The "whale"—Deepseek’s logo and internal moniker—was not sleeping. It was learning to reason.

As we enter 2026, leaks and preprint whispers suggest Deepseek is preparing to release a model that does not simply compete on "tokens per second" or "price per million." Instead, it is targeting the one capability that Western labs believed was their moat: high-order cognitive reasoning and code synthesis under extreme hardware constraints. The implications for the global AI ecosystem are not just commercial; they are geopolitical.

The Constraint Engine: Why Scarcity Bred Innovation

To understand what is coming next, one must understand the environment that forged it. For three years, Chinese AI laboratories have operated under the shadow of stringent export controls on high-performance semiconductors. While Silicon Valley scaled up with clusters of H100s and B200s, engineers in Hangzhou and Beijing were forced to play a different game.

They could not rely on brute force. When compute is scarce, code must be elegant. This constraint forced Deepseek to perfect the Mixture-of-Experts (MoE) architecture long before it became the standard in the West. They learned to activate only a fraction of their parameters for any given inference, keeping energy costs low and throughput high.
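
To make the idea of activating only a fraction of the parameters concrete, here is a minimal Python sketch of top-k expert routing. It is an illustration under stated assumptions, not Deepseek's implementation: the eight toy experts, the router scores, and the function names (`route_top_k`, `moe_forward`) are all hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)  # renormalize over the chosen experts
    return [(i, probs[i] / norm) for i in top]


def moe_forward(x, experts, router_scores, k=2):
    """Run only the k selected experts and mix their outputs by weight."""
    selected = route_top_k(router_scores, k)
    output = sum(w * experts[i](x) for i, w in selected)
    return output, [i for i, _ in selected]


# Hypothetical toy experts: expert number a simply multiplies its input by a.
experts = [lambda x, a=a: a * x for a in range(1, 9)]
# Hypothetical router scores for a single token.
scores = [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -0.5, 1.0]

y, used = moe_forward(1.0, experts, scores, k=2)
print("experts used:", used, "of", len(experts))
print("output:", round(y, 4))
```

With k=2 of 8 experts, only a quarter of the expert functions run for this input; the other six are never evaluated, which is where the savings in compute come from.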

The rumors regarding their 2026 flagship—codenamed "Deepseek-R" (Reasoning)—suggest they have applied this efficiency to the "System 2" thinking process. If OpenAI’s o1 model demonstrated that giving a model time to "think" yields better results, Deepseek’s counter-move is to make that thinking process mathematically cheaper. The goal is not just a smarter model; it is a smarter model that can run on consumer-grade hardware.

Rumored Capabilities: The 2026 Spec Sheet

While official specifications remain under NDA, analysis of GitHub commits and chatter on Hugging Face suggests three distinct capabilities that define this new generation.

1. Multi-Head Latent Attention (MLA) at Scale

The bottleneck for long-context reasoning has always been Key-Value (KV) cache memory. As a conversation grows, the memory required to track it expands linearly with the number of tokens. Deepseek pioneered MLA to compress this cache. The 2026 model reportedly pushes this compression to a 100:1 ratio. If that holds, a user could feed the model an entire codebase, or a large body of legal precedent, and the model could "hold" that context in active memory on a single GPU.
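
A back-of-the-envelope calculation shows why the cache is the bottleneck. The model shape below (60 layers, 64 key-value heads of dimension 128, 16-bit values, a 128,000-token context) is a hypothetical configuration chosen for illustration, not a published Deepseek specification, and the 100:1 figure is the rumored ratio from the text applied as a plain division.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bytes_per_value=2):
    """Size of an uncompressed KV cache for one sequence.

    The leading factor of 2 accounts for storing one key vector and one
    value vector per head, per layer, per token.
    """
    return 2 * tokens * layers * kv_heads * head_dim * bytes_per_value


GIB = 2 ** 30

# Hypothetical model shape and context length (assumptions, see lead-in).
baseline = kv_cache_bytes(tokens=128_000, layers=60, kv_heads=64, head_dim=128)
compressed = baseline / 100  # the rumored 100:1 ratio, taken at face value

print(f"uncompressed: {baseline / GIB:.1f} GiB")
print(f"at 100:1:     {compressed / GIB:.2f} GiB")
```

Under these assumed numbers the uncompressed cache comes to roughly 234 GiB, far beyond any single GPU, while a 100:1 reduction brings it to about 2.3 GiB. Doubling `tokens` doubles both figures, which is the linear growth described above.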

2. The "Coder-Reasoner" Hybrid

Previous models treated coding and creative writing as separate domains. The new Deepseek architecture treats code as the language of logic. It reportedly translates complex logic problems into pseudo-code intermediates before solving them. By using code execution as a "scratchpad" for its own thoughts, the model reduces hallucination rates in math and logic tasks significantly. It doesn't just guess the answer; it computes it.
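
The general pattern of computing an answer instead of guessing it can be sketched as follows. Here `draft_expression` is a hypothetical stand-in for the model's intermediate step (it simply returns a hard-coded expression), and `evaluate` is a small, restricted arithmetic evaluator built on Python's `ast` module. Nothing here reflects Deepseek's actual pipeline, which has not been published.

```python
import ast
import operator

# Only these arithmetic operators are allowed in the scratchpad.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
    ast.Pow: operator.pow,
    ast.USub: operator.neg,
}


def evaluate(expr):
    """Evaluate a plain arithmetic expression; reject anything else."""
    def walk(node):
        if isinstance(node, ast.Expression):
            return walk(node.body)
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.operand))
        raise ValueError("unsupported expression")

    return walk(ast.parse(expr, mode="eval"))


def draft_expression(question):
    """Hypothetical stand-in for the model translating a question into code."""
    # Three legs of 120 km at 80 km/h, plus two 15-minute stops, in hours.
    return "3 * 120 / 80 + 2 * 15 / 60"


question = "How long does the trip take, in hours?"
answer = evaluate(draft_expression(question))
print(answer)  # prints 5.0
```

The design choice worth noting is the split of responsibilities: the language model only has to produce the right expression, and a deterministic interpreter does the arithmetic, so a class of numeric slips is removed by construction.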

3. Auxiliary Loss-Free Load Balancing

In standard Mixture-of-Experts models, a "router" decides which experts handle each token. Often, the router becomes biased, overusing some experts and ignoring others. The usual remedy is an auxiliary penalty in the training loss, which can itself interfere with what the model learns. Deepseek has reportedly solved this with a load-balancing technique that needs no such penalty and keeps every expert in regular use. The result is a model that is "dense" in knowledge but "sparse" in execution costs.
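
One publicly described way to balance experts without an auxiliary loss is to keep a per-expert bias that is added to the router scores only when choosing the top-k experts, then nudge that bias down for overloaded experts and up for underloaded ones after each batch. The simulation below is a minimal sketch of that idea. The skewed router, the step size `gamma`, and every function name are assumptions made for illustration, and nothing here is confirmed for the rumored 2026 model.

```python
import random


def top_k(scores, k):
    """Indices of the k largest scores."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]


def simulate(steps, num_experts=8, k=2, batch=256, gamma=0.05,
             use_bias=True, seed=0):
    """Return the per-expert token counts observed in the final batch."""
    rng = random.Random(seed)
    # Hypothetical biased router: higher-index experts get higher scores.
    skew = [0.5 * i for i in range(num_experts)]
    bias = [0.0] * num_experts
    target = batch * k / num_experts  # perfectly even load per expert
    load = [0] * num_experts

    for _ in range(steps):
        load = [0] * num_experts
        for _ in range(batch):
            scores = [skew[i] + rng.gauss(0, 1) for i in range(num_experts)]
            # The bias affects which experts are selected, nothing else.
            for e in top_k([s + b for s, b in zip(scores, bias)], k):
                load[e] += 1
        if use_bias:
            for i in range(num_experts):
                if load[i] > target:
                    bias[i] -= gamma  # overloaded: make it less attractive
                elif load[i] < target:
                    bias[i] += gamma  # underloaded: make it more attractive
    return load


unbalanced = simulate(100, use_bias=False)
balanced = simulate(100, use_bias=True)
print("without bias:", unbalanced)
print("with bias:   ", balanced)
```

In this toy setup the unbiased router sends most tokens to the last few experts, while the bias-adjusted router ends up with loads clustered near the even target of 64 tokens per expert. No term is added to any training loss; the correction lives entirely in the selection step.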

The Competitive Terrain: China’s "Big Five"

Deepseek does not operate in a vacuum. It is the tip of a spear in a fiercely competitive domestic market. The "War of a Hundred Models" that characterized 2024 has consolidated into an oligopoly of five key players, each carving out a distinct strategic niche.

1. Deepseek (The Disruptor)

Strategic Focus: Open Source & Algorithm Efficiency.

Deepseek plays the role of the insurgent. By open-sourcing models that rival GPT-4 and Claude, they undercut the business models of proprietary giants. Their strategy is commoditization: make intelligence so cheap that no one can build a moat around it. They are the favorite of the developer class because they provide the weights, the code, and the methodology.

2. Alibaba Cloud / Qwen (The Infrastructure Utility)

Strategic Focus: Enterprise Integration & Multimodality.

The Qwen (Tongyi Qianwen) series is less about "chat" and more about "work." Alibaba has aggressively integrated Qwen into DingTalk (their version of Slack) and their cloud infrastructure. Qwen excels at visual understanding and document analysis. If Deepseek is the researcher, Qwen is the office manager. Their goal is to be the operating system of Chinese business.

3. Baidu / Ernie (The Old Guard)

Strategic Focus: Search & Consumer Application.

Baidu was the first mover, and they bear the scars of it. The Ernie (Wenxin Yiyan) model faces skepticism from the technical elite but holds massive distribution power through Baidu Search. They are betting on "agentic" workflows—ordering coffee, booking travel, managing calendars—rather than raw coding prowess. Baidu aims to be the interface layer, not the compute layer.

4. 01.AI (The Unicorn)

Strategic Focus: The "Super App" Ecosystem.

Led by Dr. Kai-Fu Lee, 01.AI is the most Silicon Valley-esque of the group. They focus on consumer applications that "delight." Their model, Yi, is known for its high-quality English-Chinese bilingual capabilities. They are targeting the global market, attempting to build a bridge product that serves both East and West, focusing on mobile-first productivity.

5. Tencent / Hunyuan (The Social Fabric)

Strategic Focus: Gaming, Media & WeChat.

Tencent was late to the party, but they own the venue. With WeChat, they control the digital lives of a billion people. Hunyuan is being trained on a dataset no one else has: the social interactions of an entire nation. Their focus is on generative media—images, 3D assets for gaming, and conversational avatars. They are building the metaverse engine.

The Geopolitical Calculus

The emergence of a reasoning-capable model from Deepseek challenges the prevailing narrative of semiconductor determinism. The theory was that by restricting access to the absolute cutting edge of silicon (NVIDIA's latest), the West could freeze China’s AI development in place.

That theory is failing.

By forcing engineers to optimize for older or less powerful chips, the sanctions inadvertently cultivated a culture of algorithmic efficiency. While US labs burn gigawatts training larger and larger dense models, Deepseek is refining the art of doing more with less.

If the 2026 rumors hold true, we are about to witness a fork in the path of AI development. One path leads to massive, energy-hungry omni-models controlled by three American hyper-scalers. The other path, carved out by the "whale" in Hangzhou, leads to efficient, modular, code-centric intelligence that runs on the edge.

The whale is waking up. And it speaks Python.

Key Takeaways

- Efficiency over Scale: Deepseek’s 2026 strategy focuses on algorithmic density (MLA, MoE) rather than raw parameter size, largely due to hardware constraints.

- Reasoning as a Commodity: The rumored "Deepseek-R" aims to democratize "System 2" thinking (Chain of Thought) at a fraction of the inference cost of US competitors.

- The Coding Core: Future models will reportedly use code execution as an internal scratchpad for logic, reducing hallucination in complex tasks.

- The Big Five Oligopoly: The Chinese market has stabilized around Deepseek (Open Source), Alibaba (Infrastructure), Baidu (Search), 01.AI (Mobile/Consumer), and Tencent (Social/Media).

- The Sanction Backfire: Export controls have accelerated Chinese innovation in software architecture to compensate for hardware deficits.

Stay Connected

Follow us at @leolexicon on X

Join our TikTok community: @lexiconlabs

Watch on YouTube: Lexicon Labs

Newsletter

Sign up for the Lexicon Labs Newsletter to receive updates on book releases, promotions, and giveaways.

Catalog of Titles

Our list of titles is updated regularly. View our full Catalog of Titles
